Exploration of Data - Who pays more for healthcare insurance? ~ Data Analytics with R

- Vesna

- Sep 6, 2023

- 7 min read

Updated: Mar 31, 2024

Recently, I took a very engaging course about data analytics; everything from knowing how to read data, clean it, model it effectively, and knowing how to analyze it was covered. It was an intense three-week course, and I believe that my readers would find it interesting as well. Although this article may be longer than my usual posts, it has a lot of helpful information, from the basics, all the way to how I analyze datasets. Also, before I get started, I would like to say that I used a couple datasets that weren’t built in to R; I got them from a great website called “Kaggle” (https://www.kaggle.com/datasets/andrewmvd/heart-failure-clinical-data, https://www.kaggle.com/datasets/mirichoi0218/insurance) which you should definitely check out if you are also interested in analyzing more datasets.

There are so many great coding languages out there, such as Python, JavaScript, HTML, SQL, and more, but for the purpose of creating graphs and analyzing data, this course really focused on R, so that’s what I’ll be using to demonstrate in this article as well. Before I explain the healthcare data, I will go through some of the basic data analysis concepts.

Data is collected observations or measurements represented as text, numbers, or multimedia. It can be collected, analyzed, shared, hacked, bought, and sold. Some examples include numbers, notes, videos, audio, photos, docs, and maps. Data can be quantitative or qualitative. Quantitative data is expressed as a number, and it’s either counted or compared, while qualitative data has textual descriptions, such as maps or pictures. Data can also be categorized as discrete or continuous. Discrete data is data that doesn’t change or data that has a limited value, such as how many employees work for you. Continuous data is date that continuously changes, such as time or how old you are.

Data Visualization is a big part of being able to analyze data effectively. Infographics utilize design strategies and pre-attentive attributes to make sure data is easy to read and understand. Pre-attentive attributes are information that can be processed visually almost immediately (such as size, color, and orientation) and it makes it easy to find patterns and trends in large datasets.

Before you start coding, you must import some libraries so you have more functions that you can use in your code. For example, the ggplot2 library increases functionality for making graphs by adding colors and other different features to the graph, such as labels, that make it easier to analyze. This is what it may look like:

Some examples of useful graphs that help to visualize data include bar graphs, box plots, scatter plots, and pie charts. I will be using the built in Iris dataset in R to demonstrate the code to create each graph and what they look like. I will also be utilizing the ggplot2 library to bring my graphs to the next level.

Bar graphs are some of the easiest graphs to create and read. It is easy to show relative sizes as the bars for each category are right next to each other. The geom function to create bar graphs is geom_bar(). Box plots (also called box and whisker plots) show the five number stat summary of a dataset on a easy to read graph. They can easily show outliers, with the “whiskers” and show the average and IQR in the box. The geom function is geom_boxplot(). Scatter plots, also called dot plots show if there is a relationship between the x and y values, and it is easy to show if there is a linear relationship, which becomes useful in predictive modeling. They are very simple to read and hard to misunderstand. The geom_point() function is what adds the dots to the graph. Below are examples of all the graphs listed above using the built in Iris dataset.

Another part of analyzing data is being able to see a messy dataset and being able to clean it and use it effectively. I found two big data sets off of Kaggle that we can use to clean and graph using the above data visualization techniques that I discussed above. Kaggle is a website where people can make, share, and use public data sets for learning purposes. I would encourage you to check that out and find a dataset that interests you to analyze. The first data set I found was relatively larger but will be easy to make graphs out of, while the other is on the smaller end, but will be useful when we get into predictive modeling. Prior to cleaning the first dataset is about 1340 rows and 7 columns. It’s about insurance charges depending on where you live, what health problems you have, how many kids you have, your gender, age, and whether you smoke or not. You may already be able to predict how different factors contribute to increased healthcare charges, but we’ll get into that later. The second dataset clocks in at about 300 rows and 13 rows, and it’s about different medical conditions that may result in heart failure.

As you can see, these are very big datasets and would take a lot of time and energy to clean one by one, so we’re going to go over some cleaning and filtering techniques that will greatly help. Some things to look out for when cleaning data include missing values, duplicate data, inconsistent data, and outliers. Missing values may occur if some data was left unrecorded to the surveyor skips a question. Duplicate data can occur if the same person answers the survey multiple times, which will mess up averages and general trends. Inconsistent data has typos or different labels. For example, if there is a time, and everything is in hours except for one row that is in minutes, that would be inconsistent. Finally, outliers are data that doesn’t follow a general trend. They can be a typo, a data conversion error, or even valid data.

Let’s start with finding duplicate values. The columns in the insurance claim dataset are age, gender, BMI, children, smoker, region, and charge amount. In my opinion the most unique column would be how much you paid, or the charge amount, so I’ll check that column to check if there are duplicate values. In excel, I select that column, click on the Home tab, click the drop down for Conditional Formatting, click the carrot for Highlight Cells Rules, and check for duplicate values. Excel then goes in and highlights the boxes in that column that repeat in red. That helped me find out that I had one repeating row: it came up at row 197 and again at row 583, so I just deleted the second one.

The dataset doesn’t have any labels so I don’t have to worry about inconsistent data. We’ll only be able to figure out outliers and missing values once we import into our R database and graph the dataset, so let’s get started on that.

Prior to starting anything, I made sure to use the function is.na in my console to make sure there are no missing values. Once I was sure there was no missing values, I got started on making a graph to represent each dataset. For the insurance claims dataset, one thing I want to know is whether there is any difference between whether men or women have greater healthcare needs. So, I decided to plot a scatter plot that will show me how much each man/woman had to pay all in one graph. The function I used to plot the points was geom_jitter(), and the result is below:

From this graph, I can see that it’s about the same distribution, and that the majority of men and women have had to pay healthcare charges of between 1,000 to 13,000 dollars. Another thing I want to know is whether BMI (Body Mass Index) has an impact on how much you have to pay in healthcare. So, I’ll change the x-value to BMI, and plot that graph as well. I used the same scatter plot format, so the code stayed almost the same.

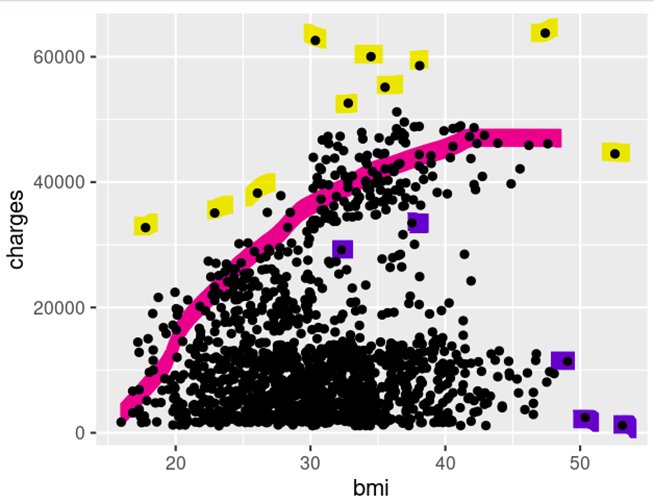

Woah, that graph looks so much different from the gender graph. Let’s take a moment to analyze what this means. There’s a cluster of data points around where the BMI is 20 to 40, so I can automatically say that the majority of surveyors were in that BMI and had about the same healthcare charges. However, there is also another upward trend above that, highlighted in pink, in the picture below.

Highlighted in yellow, there are some outliers that lie above the pink trend line. There’s some values in the 10 – 20 BMI range and it just gets higher as the BMI goes up. There’s also some low outliers (highlighted in purple) that are interesting to me, because there in the 50 BMI range, but the charges are really low too. In conclusion, I would say that in general the charges do go up, but it depends on the specific person.

Now let’s move on to the heart failure dataset. After checking for missing values (there were none), one thing I want to know is whether men or women are more likely to die of heart failure. I decided to use a bar graph, where the x axis is whether they were a man or woman, and the y axis is how many people of each gender died of heart failure. Below is the code and the result of the graph.

One interesting thing about this dataset that reflected in the graph (other than the answer to my question), was that the gender was described in binary, 0 meaning female, and 1 meaning male. This was new to me at first, but I soon realized that graphing it did not make a difference: the axis labels were just numbers instead of descriptions. As you can see however, according to this dataset, more men than women died of heart failure. This is probably more correlation than causation; there are probably other factors that led to this, and this is probably just a coincidence. To confirm my hypothesis, I made a few more graphs, but instead of plugging in gender for the x axis, I decided to use other factors such as anemia, diabetes, high blood pressure, and smoking. Below are the results for each. (Side note: For this dataset, 0 is true and 1 is false.)

For all these graphs, we can see that although the difference between people with the health condition and those without, the former group still did have a higher chance of heart failure. This also proves that (at least in this dataset) gender did not have a cause in heart failure.

Predictive Modeling is a method used to predict future behavior of a dataset, using graphs or statistics. It works by analyzing already collected data and generating a model to help predict future outcomes. When you decide to utilize predictive modeling, it’s important to split your original data into two parts: testing and training data. Training data is the data used to train your model to make effective predictions, and testing data is the data you use to test your model to make sure it’s making accurate predictions. We won’t dive into this yet however, so make sure to keep an eye out for my next part, where we get into making predictive models and analyzing the results!

Remember to like this post, rate it, and comment down below!

Excellent nice post..

Excellent and more informative about the blog....keep going

Nice post.